AI Edge Computing in Industrial Automation: What Manufacturers Need to Know

What Is AI Edge Computing?

AI edge computing means running machine learning inference locally, on hardware deployed on the factory floor at the "edge" of the network, rather than sending data to a remote cloud server for processing. This cuts round-trip latency (from roughly 100–500 ms for cloud-based AI to under 10 ms at the edge), reduces bandwidth costs, and keeps sensitive production data within the factory.
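
To make the latency figures above concrete, the short Python sketch below estimates how far a part travels on a conveyor while the system waits for an inference result. The conveyor speed of 0.5 m/s and the function name are illustrative assumptions, not figures from any specific production line.

```python
def travel_during_latency_mm(line_speed_m_per_s: float, latency_ms: float) -> float:
    """Distance in mm that a part moves while waiting for an inference result."""
    # 1 m/s equals 1 mm per millisecond, so m/s multiplied by ms gives mm.
    return line_speed_m_per_s * latency_ms


LINE_SPEED = 0.5  # m/s, hypothetical conveyor speed (assumption)

for label, latency_ms in [("cloud (low)", 100), ("cloud (high)", 500), ("edge", 10)]:
    mm = travel_during_latency_mm(LINE_SPEED, latency_ms)
    print(f"{label:13s} {latency_ms:4d} ms -> part moves {mm:.0f} mm")
```

Under these assumptions the part moves 50 to 250 mm before a cloud result arrives, compared with 5 mm at the edge, which is why a reject mechanism placed close to the camera usually depends on local inference.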

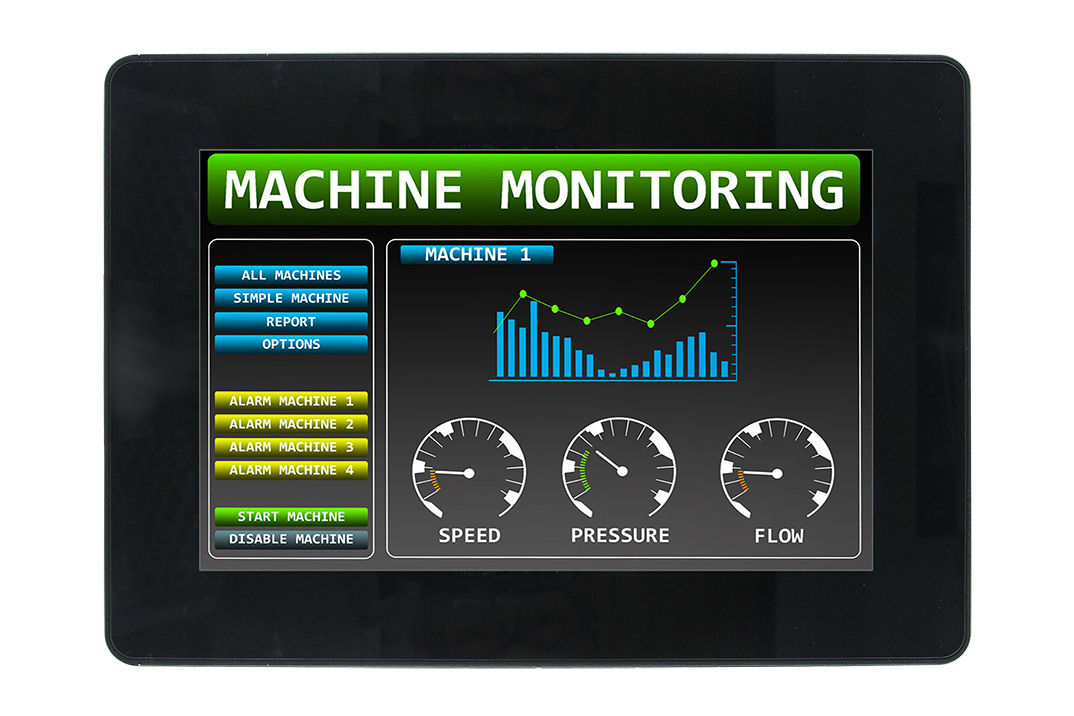

Industrial AI Applications

- Visual quality inspection — cameras + AI detect surface defects, dimensional errors, and label misalignment at production-line speeds

- Predictive maintenance — vibration, temperature, and current sensors analysed by AI to predict bearing failure 2–4 weeks before it occurs

- Process optimisation — AI models adjust process parameters in real time to maintain yield and reduce waste

- Anomaly detection — AI identifies unusual patterns in sensor data that precede equipment faults
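
As a rough illustration of the anomaly detection idea in the list above, the Python sketch below flags sensor readings that deviate sharply from a rolling baseline. It uses a simple rolling z-score rather than a trained AI model, and the window size, threshold, and function name are assumptions chosen for the example. Production systems typically use learned models and tuned thresholds.

```python
from collections import deque
from statistics import mean, stdev


def rolling_zscore_anomalies(readings, window=20, threshold=3.0):
    """Return indices of readings that lie more than `threshold` standard
    deviations from the mean of the preceding `window` readings."""
    history = deque(maxlen=window)  # holds the most recent `window` readings
    anomalies = []
    for i, value in enumerate(readings):
        if len(history) == window:  # only score once the baseline is full
            mu = mean(history)
            sigma = stdev(history)
            if sigma > 0 and abs(value - mu) / sigma > threshold:
                anomalies.append(i)
        # Every reading joins the baseline, including flagged ones.
        history.append(value)
    return anomalies


# Hypothetical vibration readings with one injected spike at index 25.
baseline = [1.0, 1.1, 0.9, 1.05, 0.95] * 6
readings = baseline[:25] + [5.0] + baseline[25:]
print(rolling_zscore_anomalies(readings))  # prints [25]
```

Because the whole calculation needs only the last few readings, it can run next to the sensor without sending raw data off the factory floor.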

AI Edge Hardware: What You Need

AI inference workloads generally need dedicated acceleration hardware, or a software toolkit that makes better use of the processors already in the system:

- GPU (NVIDIA Jetson, RTX) — highest performance; ideal for vision AI with multiple cameras

- Intel NPU (Neural Processing Unit) — built into Intel Core Ultra processors; excellent for moderate inference workloads without a discrete GPU

- Intel OpenVINO — software toolkit that runs AI models on Intel CPUs, GPUs, and VPUs

- Hailo AI accelerator — PCIe or M.2 AI inference card for adding acceleration to existing platforms
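
When a platform offers more than one of the options above, the application has to decide which device runs the model. The Python sketch below shows one way to do that. It assumes device names in the style OpenVINO reports through `Core().available_devices` (for example "CPU", "GPU.0", "NPU"); the helper `pick_device` and its default preference order are hypothetical and should be adjusted to the workload.

```python
def pick_device(available, preference=("GPU", "NPU", "CPU")):
    """Return the first available device that matches the preference order.

    `available` is a list of device names such as ["CPU", "GPU.0", "NPU"].
    A suffixed name like "GPU.0" counts as a match for "GPU".
    """
    for wanted in preference:
        for name in available:
            if name == wanted or name.startswith(wanted + "."):
                return name
    raise RuntimeError(f"none of {preference} found in {available}")


# Hypothetical device lists for two different box PCs.
print(pick_device(["CPU", "NPU"]))           # prints NPU
print(pick_device(["CPU", "GPU.0", "NPU"]))  # prints GPU.0
```

The returned name could then be passed as the device argument when compiling a model. Falling back to the CPU keeps the same application usable on hardware without an accelerator, at lower throughput.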

Avalue AI Edge Platforms from TSL Automation

TSL Automation supplies Avalue industrial AI box PCs and motherboards with Intel Core Ultra processors (including integrated NPU), PCIe GPU expansion slots, and high-speed camera interfaces. Contact us to design an AI edge computing solution for your quality inspection or predictive maintenance application.

Frequently Asked Questions

What is AI edge computing in manufacturing?

What are the main industrial applications of AI edge computing?

What hardware is needed for AI edge computing on a factory floor?

Is AI edge computing better than cloud AI for factories?

Can TSL Automation supply AI edge computing hardware for India?

TSL Automation Solutions

Head of Marketing, TSL Automation Solutions

Sanjana covers industrial automation trends, product launches, and technology insights for TSL Automation Solutions, a Mumbai-based distributor of HMI, Panel PC, and embedded computing systems serving manufacturers across India and globally.

